My mentors have shown me what makes for a good presentation, and now I can’t

un-see the fact that most presentations are just awful. After reading this post

you won’t be able to un-see it either - and you’ll learn how to stand out from

the crowd by making an excellent presentation instead.

It’s easy to make a bad presentation by accident

The first reason that most presentations are boring is that for most people it’s

easier and more intuitive to make a boring presentation than it is to make an

exciting one. For example, many people think it’s a good idea to end their talk

with a “Questions” slide. This seems natural: many presentations end with a Q&A

session, so it “makes sense” to have a slide indicating that the Q&A session has

begun. In reality though, such “Questions” slides are utterly useless. I explain

why in more detail in the next section, but the biggest

reason is that “Questions” slides get the longest screen time of any of the

slides of a talk while simultaneously providing no actual content. Instead, a

far better approach is to end one’s talk with a well-organized conclusion slide

that advertises oneself while simultaneously reiterating one’s presentation’s

key takeaways. This is better because it reminds the audience who the presenter

is and why the listeners should care about what they just said, and gives people

a foothold for asking questions about the work. It’s of course much harder to

make a proper conclusion slide though, so that’s one reason why they are less

commonly seen.

The next (and perhaps more important) reason that people make inferior

presentations is that they were simply never taught how to make superior ones.

Again, most people don’t know how to make good presentations to begin with, and

so most therefore aren’t qualified to give advice on how to compose a fine

presentation. This leads to a positive feedback loop where the blind lead the

blind, and in the end hardly anyone knows how to put together a decent set of

slides!

At this point you’re probably thinking, “OK genius, so if most presentations

suck, how about you stop complaining about them and tell me how to make one that

doesn’t?” The answer is simple: Just watch the Simon Peyton Jones talk on how to

give a great presentation. That’s it, just watch that talk and you’ll be a certified grade-A

presenter!

…Just kidding of course. Don’t get me wrong, that talk is really good, and you

should try to follow most of SPJ’s presentation advice most of the time.

However, his talk is very high-level, and for people who are absolute beginners

at giving talks (and I contend that most people are), there is not enough

concrete actionable advice to follow. Simply put, SPJ leaves out many of the

finer, more granular details on how to forge and deliver a captivating

presentation. So here’s my (opinionated) list of things to do to make a

magnificent presentation that will earn you the recognition you deserve for all

your hard work!

Tenets for making excellent presentations

Go light on the text, heavy on the visuals

You should strive to have as few words on your slides as possible without

sacrificing clarity because it will make it easier for people to pay attention

to and understand your talk. Any words on your slides will compete with you for

your audience’s attention, so by having fewer words on your slides, your

audience will more easily be able to focus on you and what you are saying. This

also makes it easier for the audience to understand what you are saying,

because their attention won’t be divided between you and your slides.

On the other hand, you should go out of your way to add fun, simple visuals to

your talk to help explain or complement what you are saying. You can leverage

the old adage, “a picture is worth a thousand words” to your advantage by using

images to explain complex concepts clearly and concisely. The correct image can

help the audience comprehend what you are trying to say far faster than text

can, and without nearly as much cognitive overhead on the listener’s end. This

means that they’ll understand what you are trying to say more quickly and

easily, and as a result will be more likely to keep listening to you.

I recommend using Flaticon for images. Dr. Kevin Moran (the winner of the 2024

ACM SIGSOFT Early Career Researcher Award) recently recommended it to me, and I

had great success when I used it to make my ICSE 2024 talk.

“But Brent,” you may be thinking, “how can I explain my super complex

presentation topic without words?” This brings me to my next point…

Treat your talk like a sales pitch

Craft your presentation as if you were making a high-level (i.e., not very

detailed) advertisement to deliver to potential investors of your work. The

point of the talk is not to explain every little detail about your work, but

to excite the audience and encourage them to learn more about it (e.g., by

collaborating with you, reading your paper, buying your product, or asking you

questions). For instance, if you were to give a talk about a new battery you

invented, you would just say “You can save money by buying my batteries, because

they last 40% longer than other batteries and thus don’t need to be replaced as

often.” You would want to avoid talking about the specifics as to how your

batteries manage to last so long, since your audience more than likely would not

care about these details.

Put another way: don’t tell the audience all the cool things about your

idea/product/technique, and expect them to realize on their own why it’s a great

piece of work that they should care about. Instead, just tell the audience why

they should care about your great new idea, and provide a very brief intuition

as to how it works. Furthermore, by omitting such details from your main

presentation, you give the audience very obvious questions to ask that you can

more easily prepare for. Speaking of which…

Anticipate and prepare for questions

Predict the sorts of questions your audience will ask ahead of time and prepare

to answer them. This will show the audience that you are knowledgeable about your

presentation subject, and earn you more of their respect. One great way to do

this is to prepare a first draft of your slides with too much detail, and as you

refine your presentation, gradually cut unnecessary details out of the main talk

and move them to an extra section after your talk’s conclusion solely for

answering questions about these details. It may feel like extra work to prepare

slides that may never get used if the audience doesn’t ask the questions you

expect them to ask, but if you’re going to cut the content out of the slides

anyway it doesn’t require much effort to just append them to the end of the

presentation instead. Moreover, with judicious content pruning you can subtly

guide the audience to ask the exact questions you want them to ask by leaving

seemingly obvious omissions from the main presentation. This must be done

carefully though, otherwise you run the risk of leaving too many apparently

obvious details out of your talk and looking like a fool. Unfortunately I don’t

have any concrete advice on the best way to do this (yet).

While all presenters should prepare for questions, the approach of putting extra

slides for questions at the end of your presentation may not work so well if you

plan to accept questions in the middle of your talk instead of waiting to take

questions at the end. This is because you should…

Only move forward

You should never go back to a previous slide while giving your talk because

doing so makes it harder to follow what you are saying. People will understand

your presentation more easily if it flows smoothly from one slide to the next.

Furthermore, if you need to go back to a previous slide to explain something on

a later slide, that suggests that your slides were not prepared in the

appropriate order to begin with. Astute members of the audience will notice

this, suspect that you don’t really know what you are talking about, and choose

to stop listening to you. To prevent this from happening you should prepare and

practice presenting your slides from start to finish without stopping or going

back. The first step to doing this is to…

Nail the first impression

If your presentation is an advertisement for your work, then your first slide is

the advertisement for the advertisement. It serves two purposes: to inform and

intrigue. When creating the first slide, be sure to include the obvious details

such as the title of the work, the names of the authors (perhaps accompanied by

images of them) and their institutions, the names of any agencies that funded

the work, and the presentation venue and date. This helps people attending your

talk confirm that they are in the right room, and makes it easier for others to

find your talk online in the future. If the first author is not the one giving

the talk, make that clear on the title slide as well by writing the name of the

author presenting the work in bold and by including images of all the work’s

authors (assuming space allows for this).

When you present your first slide do not say the name of your talk, because the

title of your talk is on the first slide anyway and your audience (presumably)

can read. This advice is even more important to follow if you are presenting at

a conference because the session organizer will probably read the title of your

talk before you even begin presenting as well. Instead of reading the title of

your talk, introduce yourself and give a very brief overview of what you will be

talking about. For example, when I gave my ICSE 2024 talk I did not say, “Hello

everyone, today I’m presenting the work Semantic Analysis of Macro Usage for

Portability”. I began my talk by saying, “Hi everyone, my name is Brent, I am a

PhD student at the University of Central Florida, and today I’m excited to talk

with you all about macros”. You want to garner the audience’s interest early on

and build “attention momentum” (a phrase I just made up and am already thinking

about patenting), so that they’ll be willing to focus on your whole talk without

losing interest. Show the audience how much you care about what you’re about to

talk about, and it can rub off on them. In the words of Dale Carnegie,

the best way to be interesting is to be interested.

If you tend to get nervous when presenting in front of crowds, then one way to

overcome this apprehension is to memorize the first few sentences of your

presentation. That way you’ll crush your first few slides, and feel pretty

confident going into the rest of the talk. When you get to your slides that you

haven’t entirely memorized though, just be careful that you…

Do not read off your slides

This will totally obliterate your audience’s interest in what you are saying. If

your slides already say everything you are going to say, then the audience may

no longer feel the need to listen to you since they can just read your slides

instead. Some members of the audience may try to keep listening to you, but they

will likely have a hard time doing so because their attention will be split

between your written words and your spoken words.

Also, reading directly off slides is just a lazy way to present that will almost

certainly annoy your audience. It doesn’t require practice and turns your talk

into a monotonous lecture. This is another reason why your slides should have a

minimal amount of text. People

don’t want to hear you go on and on about something; they want you to…

Get to the point

Try to state the core idea behind your work, and why your audience should care

about it, as quickly and concisely as possible. Your audience almost certainly

does not care about how your new solar technology converts photons into

electricity. They almost certainly will care about how cheap it is, how much

money it will save them on their electric bill, and how soon they can expect to

receive a return on investment once they buy it. If this sounds like advertising,

that’s because it is. When you’re trying

to persuade someone to buy your product/read your paper/invest in your startup,

the first thing you need to ask yourself is “What’s in it for them? Why do they

care?” The sooner you answer those questions during your presentation, the

sooner you earn your audience’s attention.

Here’s a trick I use to figure out how to explain a topic quickly without

beating around the bush. First, I write a paragraph explaining the idea in a

fair amount of detail. I don’t focus on concision at all; my goal is just to

explain the topic as well as I can using as much text as I need. Once I’m done,

I go back and review the final sentence in that paragraph. More often than not,

that final sentence is the key idea I’m trying to convey to my reader or

listener. If it is, then I move that last sentence to the very beginning of my

explanation, and adjust the rest of my explanation to accommodate this change in

structure. When I do this I often find that many parts of my original paragraph

were entirely unnecessary to explain my key takeaway. I cut these useless parts

out, and the explanation becomes much simpler and more straightforward than

before.

After you’ve established the main idea behind your work and made it clear why

your audience should care, you can start to explain more of the context around

it (e.g., more background on the problem your idea solves and how it works). A

great way to do this is to…

Give examples

People are hard-wired to recognize patterns and learn best by example, so you

should furnish your talk with concrete examples to help explain how your work

solves a particular problem. Great problem examples not only provide context as

to why your work is important, but can also excite your audience to see how your

work solves the problem. On the other hand, don’t try to explain the insight

behind your idea first and then give an application of its usefulness, because

this can confuse and bore people.

For example, let’s say you are giving a talk on why math is important. What you

would not want to do is spend ten minutes explaining all the rules of

arithmetic, and then briefly mention vaguely that math is the cornerstone of

technological progress. What you would want to say is, “You can learn how to

budget more effectively and save money by using math”, or “In the 1960s humans

transcended the limitations of gravity and flew to space by using mathematical

formulas”. Only then, after you’ve earned the audience’s interest with your stellar

examples, would you want to start explaining how arithmetic works.

You’re probably going to give a talk on a topic much more complex than the

importance of math, though, and won’t be able to use such simple and obvious

examples. That’s not a problem, because examples are also effective for

explaining complex ideas. To explain a complex topic, choose quality

examples over a quantity of them. Start with a simple example that doesn’t

illustrate all the complexities and edge-cases of your work, and then…

Introduce complexity gradually

To explain a complex idea, start with a simple, limited example of the idea, and

then slowly layer complexity on to it throughout your presentation. For

instance, let’s pretend I’m giving a talk on home winemaking (a recent hobby of

mine):

- First, here’s the simplest example of how to make wine: Add grapes, water, and

yeast to a clean bucket, wait a few weeks, and bam, you have alcoholic fruit

juice. This works because yeast eat sugar (which grapes are full of) and turn

it into alcohol. This process is called fermentation.

- Now let’s make a stronger wine with a higher alcohol-to-water ratio (i.e., a

higher ABV). To do this, add extra sugar to the bucket before fermentation

begins. Since the yeast will have more sugar to eat, they will produce more

alcohol. (Notice how I’m building off the prior example, which introduced the

fundamental concept of fermentation). Unfortunately, this creates a new

problem: there is a limit to how much alcohol yeast can live with, so our

yeast may actually die from the alcohol they are producing before our wine

reaches our desired ABV. No more yeast, no more alcohol, no stronger wine. (At

this point I’m introducing a complication to winemaking). To fix this, we can

use a strain of yeast with high alcohol tolerance. Such strains of yeast can

withstand higher levels of alcohol and continue to convert sugar into alcohol.

- OK, we’ve got some strong wine, but now it tastes horrible. Let’s make it

sweeter. The obvious way to do this would be to simply add sugar to the wine

after it’s done fermenting. However, this won’t work because there will still

be yeast floating around in the fermented wine, and if we add more sugar to

it, the yeast will just turn it into more alcohol. (Notice how I’m building

off the concept of fermentation again. The difference between this example and

the last example though is that in the previous example, we used fermentation

to solve our problem and achieve a higher amount of alcohol, i.e. ABV, but in

this example, fermentation is the problem because we don’t want to increase

the ABV). To solve this problem, we can add chemicals to our wine to stop the

yeast from reproducing and prevent it from turning sugar into alcohol. Give

the chemicals a day to work their magic, and then we can add as much sugar

as we want to our wine without worrying about our yeast turning it into more

alcohol. (Here I’ve solved the problem by adding just a tad more complexity,

with the introduction of chemicals to the winemaking process. Notice that I

did not mention the exact chemicals used. Depending on the talk, those details

may be unnecessary, or something I would like to push the audience to ask

about.)

When preparing slides for your example, I recommend putting the most basic

example on one slide, and then adding “appear” animations to reveal the

complications. Here more than ever, it’s crucial that you use

images to introduce the

complications, and not text. Otherwise your example devolves into bullet points,

which are boring and annoying.

Provide full context when possible

Explain concepts as if your audience can’t remember anything but your key idea

(assuming you’ve already established it) and what’s visible on the current

slide. When speaking, always spell out acronyms and accompany technical terms

with their definitions. This is just another trick to reduce your audience’s

cognitive load. Save them the trouble of remembering what all your technical

jargon means so that they can focus on comprehending why it’s relevant to your

idea (and why your idea is relevant to them). Tread carefully here: although you

want to provide full context in your talk, you don’t want to do this on your

slides. This is because cramming too much context on your slides results in

walls of text that impose a cognitive burden on your audience and distract them

from what you are saying.

There’s a very fine line between providing full context and providing too much

context. Give too little context, and your audience will be lost.

Give too much, and your audience will get confused. Either

way, they won’t understand you. To determine the right amount of context

necessary for your talk, you will need to…

Practice, practice, practice

Practice presenting often and with a variety of people. Practice presenting both

to people who do and do not have any background knowledge on what you are

talking about. Feedback from the uninformed audience can help you realize when

certain ideas which seem “obvious” to you are not actually that obvious to your

audience (and thus require that you provide more context when mentioning them). The

informed audience can give you a way to practice answering technical questions

about the work. If you’re lucky enough to have a mentor with good presentation skills,

then ask them how to make the presentation more concise and engaging.

When I gave my ICSE 2024 practice talk to my mentors at the University of

Central Florida (UCF), I learned more about how to put a fun spin on my ideas

and how to better sell myself during my presentation. For example, since my

advisor Dr. Paul Gazzillo had a lot of background knowledge on the work, he was

able to recommend a few analogies I could use to better explain my research. On

the other hand, the other UCF faculty who only had general computer science

knowledge but little knowledge about my work were able to give me more general

presentation tips.

Meanwhile, when I practiced presenting my talk to my (very patient) girlfriend,

I learned how to convey complex ideas more concisely. Since my girlfriend has

very little computer science knowledge, she was willing to ask more “obvious”

questions about concepts that I implicitly assumed the audience would

understand, but apparently did not explain clearly enough. By presenting to a

non-technical audience, I improved at expressing my thoughts with minimal

technical jargon. I also had to learn how to not get mired down in the details

of my work, since that would also confuse her. In short, I had to learn how to

present using the right amount of context. This often required me to…

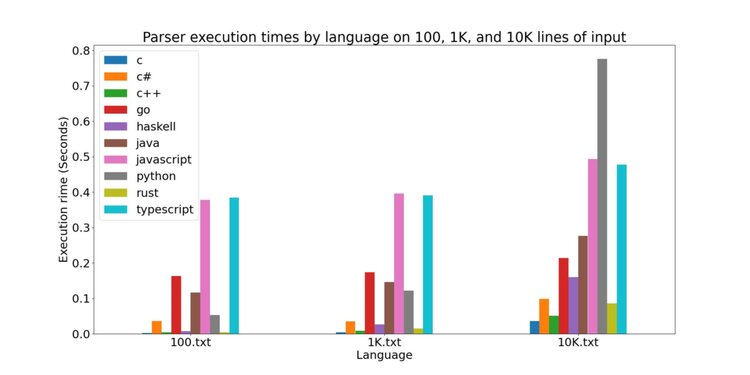

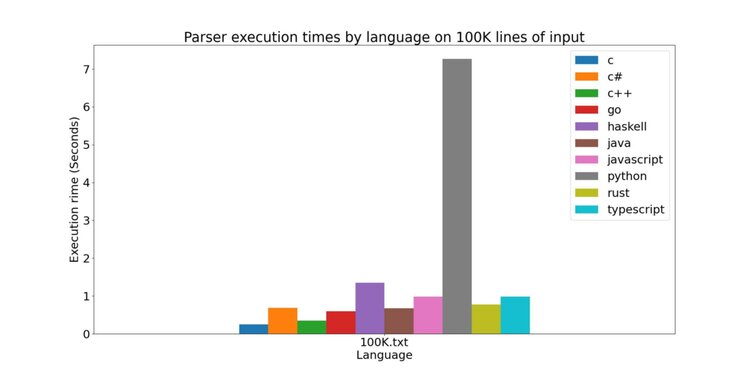

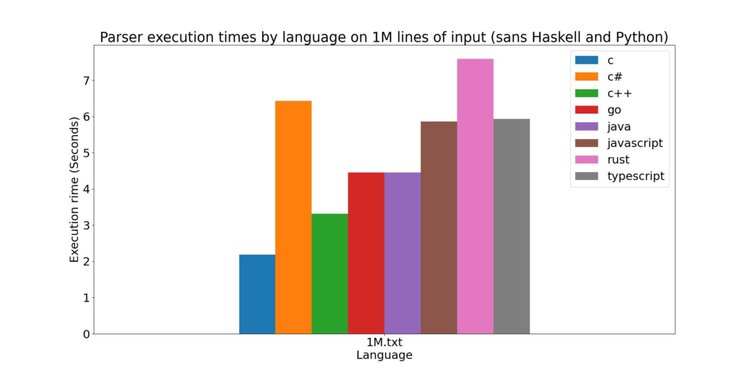

Highlight key points, and cut out the rest

Emphasize the important, exciting, and surprising parts of your work, and omit

everything else from your main talk that doesn’t serve this purpose. When you

present a figure with data (be it a table, chart, graph, code snippet,

whatever), ask yourself “What do I want the audience to glean from this?” If

there’s something in the chart that you can highlight to make this point

clearer, then do it! Do not try to make your audience read your table and

figure out on their own why the data explains how much better your work is than

prior work. Most of them won’t even try to. Just tell them instead.

Once you determine what the key part of your figure is and have highlighted it,

then consider removing the rest of the figure entirely and just leaving the

highlighted information on the slide. This will help you get to the

point when explaining the importance of your data. If you

have highlighted multiple parts of the same figure, see if you can use some sort

of average (i.e., mean, median, or mode) to summarize this information, and

replace the figure with this average. Make sure to keep the full figure on an

extra slide after your conclusion though, since this can help you answer

questions.
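
If you want a quick way to try this, here is a minimal sketch in Python of boiling several highlighted values down to one number. The values and the `summarize` helper are made up for illustration (they are not from my talk), and which average you show depends on the point you want the audience to take away.

```python
from statistics import mean, median, mode

# Hypothetical highlighted values, e.g. improvements (in percent)
# pulled from the rows of a results table you would otherwise show.
highlighted_values = [38, 40, 40, 42, 45]


def summarize(values):
    """Return the three common averages for a list of numbers."""
    return {
        "mean": mean(values),      # arithmetic average
        "median": median(values),  # middle value when sorted
        "mode": mode(values),      # most frequently occurring value
    }


summary = summarize(highlighted_values)
for name, value in summary.items():
    print(f"{name}: {value}%")
```

Pick the one number that best supports your point and put only that on the slide. The full table can live in your extra slides.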

Sometimes it can be useful to present a large, complex figure to convey just

how complicated and difficult the problem you are solving is. If you do this, my

only advice is to do so quickly. You don’t want to risk your audience actually

trying to read/understand the figure and getting distracted or confused. You

just want to shock them with it, and then take it away before they can think too

hard about it. If they really want to know more, they’ll ask you about it.

Stick the landing

End your talk with a slide that reiterates your main points, presents your photo

along with your contact info, and displays QR codes or short links to pages

where the audience can learn more about your work. When you reach your

conclusion slide don’t read anything on it; simply tell the audience that you

have finished your talk and are ready to accept questions. Don’t proceed to a

“Thank you” or “Questions” slide, because they provide no meaningful content to

your presentation. The last slide of your talk is likely to get more screen-time

than any other and is your last chance to sell yourself to your audience, so

don’t squander it!

Leave your conclusion slide up on the screen while you await questions so that

the audience can record your contact info and read and re-read your key points.

The audience may use these key points to form questions, so prepare

accordingly. If you need to go to an

extra slide to answer a question that’s fine; just try to jump back to your

conclusion slide afterward.

Finally, make sure to finish your talk ON TIME. If you run out of time in the

middle of your presentation, simply stop and say “I had more slides prepared,

but I am out of time and so will end now.” Don’t ask your audience for

permission to continue - they will likely feel bad and say sure, but believe me

they won’t appreciate you for taking more of their time. This is especially true

if your talk is the last one before a coffee or lunch break.

Be humble

Perhaps the most important advice I can give on how to improve at presenting is

to remain humble and accept the fact that the first few presentations you make

(even when armed with this advice) will likely suck. It can take weeks to

prepare an excellent talk, and you may need to throw out your first, second, and

third drafts before you arrive at something half-decent. That’s OK though - most

talks suck, so a half-decent talk is often all you need to stand out. Keep these

rules in mind when you attend your next presentation and you’ll see what I mean.

The good news is that as long as you pay attention to what you’re doing wrong,

and remain receptive to feedback, you will only continue to improve. It is

difficult, but not impossible, to make a great presentation that doesn’t suck.
